Read the latest

News, Events & Articles

Insights

DIER Launches Employment Agencies Web Portal

If you are a recruitment agency in the practice of recruiting persons for em...

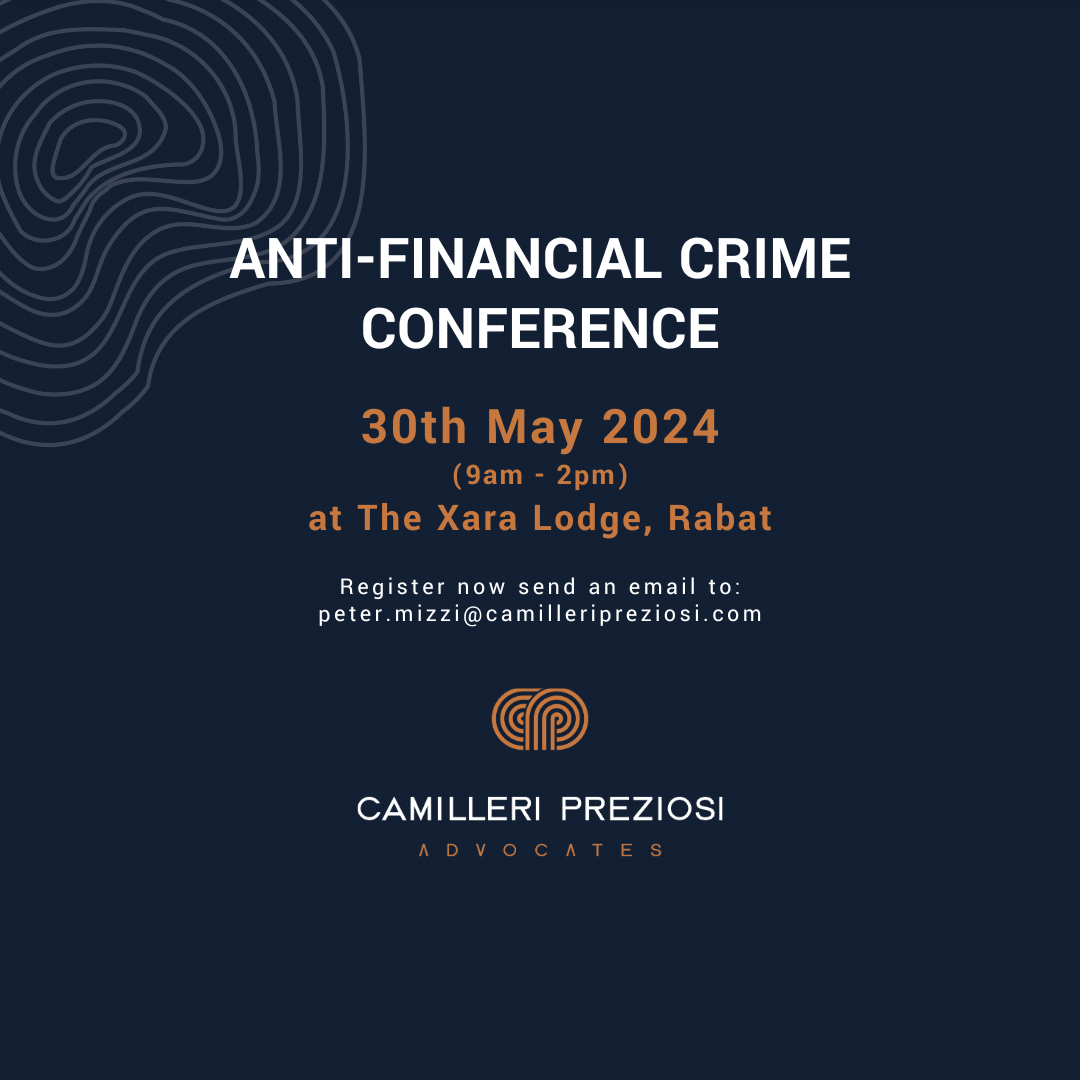

Anti-Financial Crime Conference, 2024

We are pleased to announce that registration is now open for our upcoming Anti-F...

Camilleri Preziosi Maintains Top Rankings in Legal 500 EMEA 2024 Edition

We're thrilled to announce that Camilleri Preziosi has once again upheld its sta...

Construction and Real Estate: Navigating the recent developments in Construction Law.

A series of reforms aimed at regulating the construction field...

Save the Date: Half Day Anti-Financial Crime Conference

Camilleri Preziosi Advocates invites you to join local regulatory authorities an...

Corporate Tax 2024 Practice Guide | Chambers and Partners

Donald Vella, Kirsten Debono Huskinson and Gabriella Chircop have contributed, o...

Top ranking in Tax awarded by Chambers and Partners Europe 2024

The Tax practice team headed by partner Donald Vella and assisted by partner Kir...

Top ranking in Corporate/Commercial awarded by Chambers and Partners Europe 2024

Our Corporate/Commercial practice team led by Louis de Gabriele, Donald Vella, A...

The Chambers & Partners Europe Legal Guide 2024 – Camilleri Preziosi retain Leadership Position

We are delighted to have maintained our top ranking, granted by the prestigious ...
